The debate around AI-generated content has been ongoing since the emergence of ChatGPT.

Google’s position is that it will ‘focus on the quality of content, rather than how content is produced’, that it is ‘rewarding high-quality content, however it is produced’ and, perhaps most importantly, that ‘appropriate use of AI or automation is not against our guidelines’.

Nevertheless, Google has drawn a firm line on how AI is used. The September 2023 helpful content update and subsequent core updates hit sites that scaled thin AI content hard, particularly those using programmatic approaches to generate hundreds or thousands of pages with minimal human oversight.

The distinction isn’t whether AI was involved; it’s whether the content was created to genuinely help users or to manipulate search rankings at volume. A well-researched article drafted with AI assistance and refined by a subject matter expert sits in a fundamentally different category to a batch of 500 auto-generated city pages with swapped keywords.

For that reason, content created with AI assistance is evaluated under the same guidelines as content produced by any other method. These guidelines cover quality signals like E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) and have been reinforced through several core and helpful content updates since 2022.

This article covers how businesses can build their own AI-assisted content production workflow that still meets the necessary thresholds for helpful content.

What a responsible AI content workflow looks like in practice

Creating a responsible AI-assisted content workflow requires a clearly defined process and strong quality controls. Without that foundation, most people fall back on ad-hoc prompting in tools like ChatGPT and refine the output from there.

The following process helps establish a structured workflow that can cover around 80% of the content process. The remaining 20% still relies on human input to refine the tone, verify the facts, and strip out AI writing patterns.

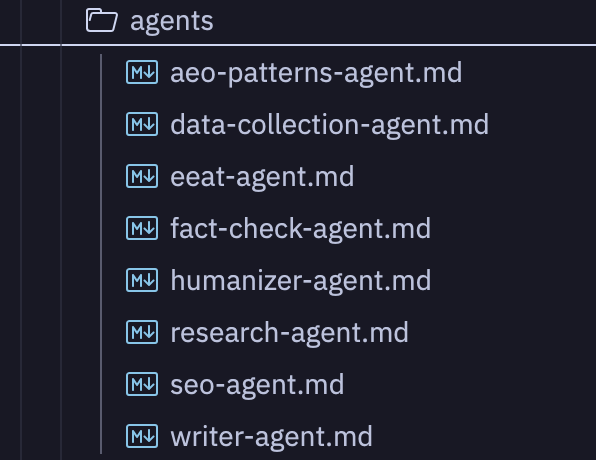

The research agents

First and foremost, the key objective throughout this process is recognising that AI needs context and a clear understanding of the intended outcome. As with any AI system, the quality of input will always determine the quality of output.

To support this, we use AI agents, which are essentially structured instruction sets that define how an AI should behave when performing a specific task. So that the AI operates with a consistent purpose, each agent combines the following: a clear brief, rules for how information should be processed, and access to specific tools or data sources.
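
As a rough illustration of the three ingredients above, an agent can be modelled as a small data structure that renders into an instruction block. This is a minimal sketch, not our production setup: the `AgentSpec` class, its field names, and the example values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    """A structured instruction set for one task (all names are hypothetical)."""
    name: str
    brief: str                                       # what the agent is for
    rules: list[str] = field(default_factory=list)   # how information should be processed
    tools: list[str] = field(default_factory=list)   # tools or data sources it may use

    def system_prompt(self) -> str:
        """Render the spec as a single instruction block for the model."""
        lines = [f"You are the {self.name} agent.", "", f"Brief: {self.brief}"]
        if self.rules:
            lines += ["", "Rules:"] + [f"- {rule}" for rule in self.rules]
        if self.tools:
            lines += ["", "Available tools: " + ", ".join(self.tools)]
        return "\n".join(lines)


# Example values are illustrative only.
topic_research = AgentSpec(
    name="topic research",
    brief="Find user pain points and primary-source statistics for the topic.",
    rules=["Cite a source for every statistic.", "Prefer primary sources."],
    tools=["perplexity"],
)
print(topic_research.system_prompt())
```

Keeping the brief, rules, and tools as separate fields makes it easier to change one without rewriting the whole prompt.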

These are integrated with MCP (Model Context Protocol) servers, which are connections that give Large Language Models access to external tools and live data sources. This allows the AI to query a tool directly, retrieve structured results, and use them in the workflow.

We use several MCP servers across our pipeline: DataForSEO for live SERP data, keyword volumes, and backlink metrics; Firecrawl for scraping and extracting content from competitor pages; and the Perplexity API for broader research queries, as we consider it the best LLM platform for automated online research. These are configured at the environment level and made available to whichever agents need them.
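
One way to picture "configured once, made available per agent" is a simple access registry. The sketch below is an assumption about how such a mapping could look; it is not the MCP configuration format, and the dictionary names and agent keys are hypothetical. The agent-to-server pairings follow the research steps described in this article.

```python
# Hypothetical registry: server purposes are taken from the article, the structure is illustrative.
MCP_SERVERS = {
    "dataforseo": "live SERP data, keyword volumes, backlink metrics",
    "firecrawl": "scraping and extracting competitor page content",
    "perplexity": "broader research queries",
}

# Assumed mapping of agents to the servers they are allowed to call.
AGENT_ACCESS = {
    "seo": ["dataforseo", "firecrawl"],
    "topic_research": ["perplexity"],
}


def servers_for(agent: str) -> dict[str, str]:
    """Return the servers an agent may use, failing loudly on unknown agents."""
    if agent not in AGENT_ACCESS:
        raise KeyError(f"no MCP access configured for agent {agent!r}")
    return {name: MCP_SERVERS[name] for name in AGENT_ACCESS[agent]}


print(servers_for("seo"))
```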

If the goal is to produce topical content that search engines can understand and surface, SEO and topic research agents should be involved from the outset to help build the foundation of the article.

Our approach to search intent underpins both the SEO research and the topic research.

MCP tools such as DataForSEO and Firecrawl help establish the SEO foundation for an article: DataForSEO gathers SERP data and keyword variations, while Firecrawl scrapes competitor pages for analysis.

Topic research can then be conducted through MCP tools like Perplexity, surfacing real user pain points from Reddit and forum discussions, gathering statistics from primary sources, and identifying angles that existing content has not yet covered.

With this information, you can develop topic clusters that provide a strong foundation for the content.

Who are you writing for

A key detail to feed into your agents is who you are writing for, as this helps them understand how best to frame the content.

Before drafting any content, we provide the following information to the agents:

- Who is the reader?

- What do they already know?

- What problem brought them here?

- What will they do with this information?
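
The four questions above can be captured as a small structured brief that is rendered into the context passed to every agent. This is a sketch under our own assumptions: the `AudienceBrief` class, its field names, and the example answers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AudienceBrief:
    """Answers to the four audience questions (field names are hypothetical)."""
    reader: str
    prior_knowledge: str
    problem: str
    next_action: str

    def as_context(self) -> str:
        """Render the brief as a text block that can be prepended to a prompt."""
        return "\n".join([
            "Audience:",
            f"- Who is the reader? {self.reader}",
            f"- What do they already know? {self.prior_knowledge}",
            f"- What problem brought them here? {self.problem}",
            f"- What will they do with this information? {self.next_action}",
        ])


# Example answers are illustrative only.
brief = AudienceBrief(
    reader="Marketing managers at small businesses",
    prior_knowledge="Basic SEO concepts, little hands-on AI experience",
    problem="Content output is too slow to keep up with competitors",
    next_action="Pilot an AI-assisted workflow with their own team",
)
print(brief.as_context())
```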

Remember, context is key: the more relevant detail we communicate to the agents, the better they will be at producing our content.

What other context can you feed into it

In addition to the above, any other information on the topic, such as insights or similar blog content you have previously written, helps the agents further understand the topic and our perspective on it.

Similarly, feeding in links to your website and guidance on the tone of voice for the article can help considerably. We created a voice guide by analysing 35 of our published articles and extracting the specific vocabulary, sentence patterns, tone characteristics, and structural conventions that define how we write.
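
Part of a voice guide can be approximated with simple text statistics. The sketch below is not how our guide was built; it only illustrates the measurable side (sentence length and recurring vocabulary), and the `voice_profile` function and the short stopword list are assumptions made for the example.

```python
import re
from collections import Counter

# A deliberately tiny stopword list, for illustration only.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "it",
             "that", "for", "on", "with", "as", "we"}


def voice_profile(articles: list[str], top_n: int = 5) -> dict:
    """Summarise average sentence length and the most frequent content words."""
    sentences: list[str] = []
    words: list[str] = []
    for text in articles:
        # Split after sentence-ending punctuation followed by whitespace.
        sentences += [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
        words += re.findall(r"[a-z']+", text.lower())
    content_words = [w for w in words if w not in STOPWORDS]
    average = len(words) / len(sentences) if sentences else 0.0
    return {
        "avg_sentence_length": round(average, 1),
        "top_vocabulary": [w for w, _ in Counter(content_words).most_common(top_n)],
    }


print(voice_profile(["We test ideas. We ship fast."]))
```

Tone characteristics and structural conventions are harder to count and still need a human or model reading of the articles.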

Fundamentally, the agents carry out the data gathering, yet we still need to direct them on what to look for, which angles to pursue, and which internal resources to draw from.

The next phase

Once we have built the foundation for the content, and determined the topic and title, we can set up the agents to do their thing.

The SEO agent

A dedicated SEO agent maps target keywords (found via the DataForSEO MCP), identifies the expected entities for a given topic, produces a content outline with schema markup recommendations, and constructs an outline of the proposed header tags.

This ensures the article is structured around the recognised concepts for the topic before a word of prose is written.
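
A simple check can confirm that an outline covers the expected entities before drafting starts. This is a minimal sketch: the `Outline` and `Heading` classes are hypothetical, and matching entities by substring is a simplifying assumption.

```python
from dataclasses import dataclass, field


@dataclass
class Heading:
    level: int  # 1 for H1, 2 for H2, and so on
    text: str


@dataclass
class Outline:
    """Hypothetical container for the SEO agent's output."""
    target_keywords: list[str]
    expected_entities: list[str]
    headings: list[Heading] = field(default_factory=list)

    def missing_entities(self) -> list[str]:
        """Return expected entities that no heading mentions (case-insensitive substring match)."""
        covered = " ".join(h.text.lower() for h in self.headings)
        return [e for e in self.expected_entities if e.lower() not in covered]


outline = Outline(
    target_keywords=["ai content workflow"],
    expected_entities=["E-E-A-T", "helpful content", "schema markup"],
    headings=[
        Heading(1, "Building an AI content workflow"),
        Heading(2, "How E-E-A-T applies to AI-assisted drafts"),
        Heading(2, "What counts as helpful content"),
    ],
)
print(outline.missing_entities())
```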

The writer agent

The writer agent receives the full research output, keyword strategy, content outline, and voice guide, and pairs these with the audience persona it has already built to produce the first draft of the content.

The E-E-A-T scoring agent

The first draft is then scrutinised by the E-E-A-T scoring agent, which evaluates it against these seven weighted criteria:

- Authorship and expertise

- Citation quality

- Content effort

- Originality

- Page intent

- Subjective quality

- Writing quality

Any content scoring below 60 (out of 100) is sent back for revision, and the process repeats until the draft scores 60 or above, at which point it moves to the humaniser agent.
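
The scoring gate can be sketched as a weighted average plus a revision loop. The seven criteria and the pass mark of 60 come from the process above; the weights are placeholders we invented for this example, and `score_fn`, `revise_fn`, and `max_rounds` are hypothetical stand-ins for the scoring agent, the writer agent, and a safety limit.

```python
from typing import Callable

# Placeholder weights (percentages summing to 100), assumed for illustration only.
WEIGHTS = {
    "authorship_and_expertise": 20,
    "citation_quality": 15,
    "content_effort": 15,
    "originality": 15,
    "page_intent": 10,
    "subjective_quality": 10,
    "writing_quality": 15,
}
PASS_MARK = 60  # drafts scoring below this go back for revision


def weighted_score(scores: dict[str, float]) -> float:
    """Combine per-criterion scores (each 0-100) into a single 0-100 total."""
    missing = sorted(set(WEIGHTS) - set(scores))
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS) / sum(WEIGHTS.values())


def revise_until_pass(
    draft: str,
    score_fn: Callable[[str], dict[str, float]],
    revise_fn: Callable[[str], str],
    max_rounds: int = 5,
) -> tuple[str, float]:
    """Score the draft and revise it until it reaches the pass mark or the round limit."""
    total = weighted_score(score_fn(draft))
    rounds = 0
    while total < PASS_MARK:
        if rounds == max_rounds:
            raise RuntimeError("draft did not reach the pass mark; escalate to a human editor")
        draft = revise_fn(draft)
        rounds += 1
        total = weighted_score(score_fn(draft))
    return draft, total
```

The round limit is our addition: without it, a draft that never improves would loop forever instead of reaching a human.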

The humaniser agent

The humaniser agent strips AI writing patterns such as:

- Structural tells like antithetical section closers

- Compulsive pattern-of-three groupings

- Orphaned punchy sentences

- Generic phrasing
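
Some of these patterns can be flagged with simple heuristics before the agent rewrites anything. The sketch below is a pre-check under our own assumptions, not the humaniser agent itself: the phrase list, the regular expression, and the five-word threshold are illustrative guesses, and it only covers three of the patterns listed above.

```python
import re

# Hypothetical examples of generic phrasing; a real list would be longer.
GENERIC_PHRASES = ["in today's digital landscape", "it's important to note", "when it comes to"]

# Matches simple "x, y, and z" or "x, y and z" groupings of single words.
TRIPLET = re.compile(r"\b\w+, \w+,? and \w+\b")


def find_tells(text: str, punchy_max_words: int = 5) -> list[tuple[str, str]]:
    """Return (pattern name, matched text) pairs for a few common AI writing tells."""
    tells: list[tuple[str, str]] = []
    lowered = text.lower()
    for phrase in GENERIC_PHRASES:
        if phrase in lowered:
            tells.append(("generic phrasing", phrase))
    for match in TRIPLET.finditer(text):
        tells.append(("pattern of three", match.group(0)))
    for paragraph in text.split("\n\n"):
        stripped = paragraph.strip()
        # A paragraph made of one short sentence standing on its own.
        single_sentence = (
            stripped.endswith((".", "!", "?"))
            and len(re.findall(r"[.!?]", stripped)) == 1
        )
        if single_sentence and len(stripped.split()) <= punchy_max_words:
            tells.append(("orphaned punchy sentence", stripped))
    return tells


sample = (
    "When it comes to content, speed matters.\n\n"
    "It is fast, cheap, and scalable for most teams.\n\n"
    "That changes everything."
)
for kind, found in find_tells(sample):
    print(f"{kind}: {found}")
```

Heuristics like these produce false positives, so they are better suited to flagging passages for review than to rewriting them automatically.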

The fact checker agent

Our final agent in the process is the fact checker agent. It verifies every claim against primary sources, validates all links, and flags anything that can’t be substantiated.
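
The link validation step is the easiest part to sketch in code. The example below uses only the Python standard library and assumes that a HEAD request is enough to judge a link; some sites reject HEAD requests, so treat the results as flags for review. The function names are hypothetical, and `status_fn` is injectable so the logic can be tested without network access.

```python
import re
import urllib.error
import urllib.request
from typing import Callable, Optional

URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def extract_links(text: str) -> list[str]:
    """Find unique URLs in order of first appearance, trimming trailing punctuation."""
    cleaned = (url.rstrip(".,;:") for url in URL_PATTERN.findall(text))
    return list(dict.fromkeys(cleaned))


def http_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the HTTP status for a HEAD request, or None if the request failed."""
    request = urllib.request.Request(
        url, method="HEAD", headers={"User-Agent": "link-check-sketch"}
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code
    except (OSError, ValueError):
        return None


def broken_links(
    text: str, status_fn: Callable[[str], Optional[int]] = http_status
) -> dict[str, Optional[int]]:
    """Return links that failed to load or answered with a 4xx or 5xx status."""
    results = {url: status_fn(url) for url in extract_links(text)}
    return {url: s for url, s in results.items() if s is None or s >= 400}
```

Verifying the claims themselves against primary sources still needs the agent and a human reviewer; this only covers whether the cited pages resolve.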

Once this agent has done its work, the article is ready for proofing.

The human

Ultimately, the agents are not in place to perfect the content, but are designed to take the manual work out of the process.

As stated earlier, Google rewards quality content, and it is down to the marketing team to review what has been written, flag any inconsistencies, refine the content, and ensure that it answers the intended questions correctly.

Future content and beyond

One of the key rationales behind creating a scalable content workflow is being able to feed back information to your agents on what has worked well and what has not.

Once an article is complete, we tell the agents what we changed and why. This helps them understand our frustrations and blockers, so the lessons are baked into the process for the next article.
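
One lightweight way to keep this feedback is a log of changes and reasons per agent, replayed as extra rules on the next run. This is a sketch of the idea only: the `FeedbackLog` class and the example entries are hypothetical.

```python
from collections import defaultdict


class FeedbackLog:
    """Collects editor feedback per agent so it can be replayed as rules."""

    def __init__(self) -> None:
        self._notes = defaultdict(list)

    def record(self, agent: str, change: str, reason: str) -> None:
        """Store what the editor changed and why."""
        self._notes[agent].append(f"{change} (reason: {reason})")

    def rules_for(self, agent: str) -> list[str]:
        """Return the agent's lessons, deduplicated in the order they were given."""
        return list(dict.fromkeys(self._notes.get(agent, [])))


log = FeedbackLog()
log.record("writer", "Shortened the introduction", "readers want the answer first")
log.record("writer", "Removed unsourced statistic", "no primary source found")
log.record("writer", "Shortened the introduction", "readers want the answer first")
print(log.rules_for("writer"))
```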

Fundamentally, AI can take a lot of the heavy lifting out of content writing by helping with the research and the base structure needed to build out draft content. However, it needs to be used with care and governance. This is where the role of humans can be optimised: focusing on ensuring that quality content is produced to perform in search engines and LLMs.